You need to settle how you will do evaluation before you decide which systems to use. That is not always simple. Consider the old parable:

The Man in the Yard

Nasrudin was walking past a house on the road, when he saw a man in the yard who kept looking at different spots on the ground, but never picked up anything.

“What are you looking for?” he asked the man.

“I’m looking for my keys,” the man said. “But they’re not here. They’re in the house.”

“Then why are you looking for them in the yard?”

“Because it’s dark in the house. The light is better out here.”

Evaluation data presents a similar dilemma. The most important results are the hardest to measure. Determining whether a grantee’s efforts make any lasting change in a community is much harder than counting how many people show up at a meeting.

A Way to Get Organized

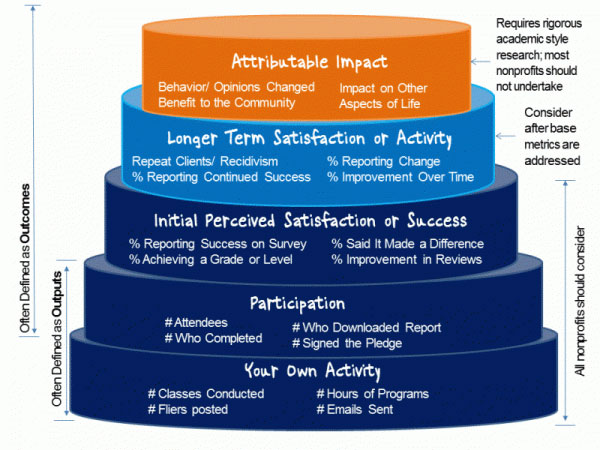

The nice people at Idealware have put together a hierarchy of outcomes around which to organize these issues. Here it is:

A discussion of the hierarchy, in an article sponsored by TechSoup, points out that measuring a foundation’s own activity is relatively simple. The data, such as how many grants you’ve awarded or how much money you’ve paid out, can be captured from the grants management system, a calendaring system, or perhaps something from accounting. Possibly, the analysis can be done in Excel.

By the time you get to the Participation level, however, more effort is required. This involves what grantees did. How many people participated in their programs? What were their demographics? You need to collect the information from grantees, store it and analyze it. If your grants management system can aggregate data from progress reports submitted on forms, this shouldn’t be too hard. Reporting the results, however, can be an issue for some grants management systems. You may need extra analysis from something like SAP Crystal Reports.
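As a rough illustration of what aggregating Participation data involves, here is a minimal Python sketch. It assumes progress reports have already been exported as simple rows; the field names (`grantee`, `participants`, `age_group`) and the sample figures are hypothetical, not taken from any particular grants management system.

```python
from collections import Counter

# Hypothetical rows exported from grantee progress reports.
# Field names and numbers are illustrative assumptions only.
reports = [
    {"grantee": "Grantee A", "participants": 40, "age_group": "18-24"},
    {"grantee": "Grantee A", "participants": 25, "age_group": "25-44"},
    {"grantee": "Grantee B", "participants": 30, "age_group": "25-44"},
    {"grantee": "Grantee B", "participants": 10, "age_group": "45+"},
]


def participation_by(rows, key):
    """Sum participant counts, grouped by the given field."""
    totals = Counter()
    for row in rows:
        totals[row[key]] += row["participants"]
    return dict(totals)


by_grantee = participation_by(reports, "grantee")
by_age_group = participation_by(reports, "age_group")
total_participants = sum(row["participants"] for row in reports)
```

The arithmetic is the easy part. The harder work is getting every grantee to report the same fields in the same way, so that the rows can be combined at all.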

To measure Perceived Satisfaction or Success, survey research, or something like it, probably will be necessary. Since question wording affects survey results so much, how to standardize this across grantees and projects becomes an issue. You may end up coding the results, therefore making judgments about their meaning. The database may not be the issue; data entry methods might be. You might want to use optical character recognition technology to process paper questionnaires.

Up the Hierarchy

Each stage further up the hierarchy brings different challenges and requires different technology. At the top, where you try to measure impact, you have to separate the effect of your intervention from all the other variables that can affect the outcome. What if you are trying to measure job creation, and the economy goes south during the project? Probably the best approach is to outsource the analysis to highly trained professionals.

You really won’t know what data analysis capacity you should adopt until you’ve decided what you will ask it to do. You must figure out the project before you adopt the technology. And since you’re buying a system that, you hope, will serve multiple projects over several years, you need to put some boundaries on those, too.

The Start of I.T. Strategic Planning

Planning evaluation is like what some groups face when moving all their files to the cloud and realizing that the content should be reorganized. Suddenly they need to address questions completely different from traditional IT. That can be a paralyzing moment. The trick is to get a framework, or, at least, a consultant, to guide you through the upstream non-technological issues before you commit to the technology. Your overall I.T. Strategic Planning will improve.
